Recently, Will Thomas and I gave a talk at Kin + Carta about caching, providing a simple, high-level overview of what it is for all our non-engineering teams. In this post, we are going to unpack caching in more detail, with some examples and common challenges, and explain why it's so important to get caching right.

Caching: what is it and when should you use it?

So, what is caching?

In its simplest form, caching is about storing data for future reference - and it’s everywhere, from the processor on your phone to large-scale architectures.

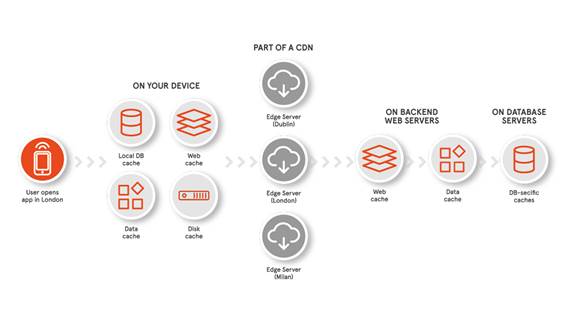

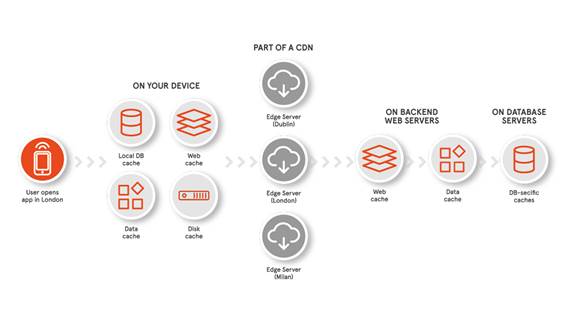

Imagine you want to access some data like a weather forecast, for example. The app on your device may have to go all the way to a backend server to retrieve and process data to inform you of the weather. To save on that round trip, caches of data from previous requests exist at various touch points along the way. When one of them already holds the answer, it can be returned immediately, removing the need to traverse further through the stack.
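To make that idea concrete, here is a minimal sketch in Python of a cache sitting in front of a slow lookup. The names `make_cached` and `fetch_forecast_from_backend` are made up for illustration and the "backend" is just a function that records when it is called; a real app would make a network request there.

```python
from typing import Callable, Dict, List


def make_cached(fetch: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap `fetch` so repeated requests for the same key skip the slow call."""
    cache: Dict[str, str] = {}

    def cached_fetch(location: str) -> str:
        if location in cache:      # cache hit: no trip to the backend
            return cache[location]
        value = fetch(location)    # cache miss: do the expensive work once
        cache[location] = value    # remember the result for next time
        return value

    return cached_fetch


backend_calls: List[str] = []


def fetch_forecast_from_backend(location: str) -> str:
    # Hypothetical stand-in for a slow request to a backend server.
    backend_calls.append(location)
    return f"Forecast for {location}: sunny"


get_forecast = make_cached(fetch_forecast_from_backend)
get_forecast("London")  # goes to the backend
get_forecast("London")  # served from the cache
```

After both calls, `backend_calls` contains a single entry: the second request never left the cache.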

Why we use caching

The primary reason for caching is to make access to data less expensive. This can mean either:

- Monetary costs, such as paying for bandwidth or volume of data sent, or

- Opportunity costs, like processing time that could be used for other purposes.

Fast data access is an absolute necessity and a rising expectation for today's users: serving up information to users quickly can literally be the difference between your app being deleted or not.

Caching in a typical app

As I previously mentioned, caching has touch points throughout the entire stack. Mobile devices keep the original data in a web cache, but perhaps more typical is a cache of transformed data. Transforming the data can be expensive, so it makes sense for the mobile app to cache the processed results rather than repeat the work.

CDNs (content delivery networks) are networks of servers in distributed locations, called ‘edge servers’. When someone makes a request, the server closest to them will return the cached data. If the CDN doesn’t have the data, further requests are made to the backend and database.

Caching in a real world example

So, how do we apply this to a real world example? Let's take our recent Met Office project - something both Will and I worked on.

When you open the app, it presents a snapshot of the weather for locations you have saved. The app will first attempt to fetch the data from a cache on the device. If no valid cache exists on the device, the request is passed to the closest CDN edge server. The CDN will then check if it has a copy of the requested data and, if not, a request is made to the backend server and that response is cached.

Lastly, the backend application will preload data in memory, for fast retrieval. If the backend application doesn't have the requested data, it will make a request to Met Office internal systems to aggregate the data, then cache the result for future requests. Future requests can now be served to users much more quickly, improving the user experience with a much shorter waiting time.
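The flow above can be sketched as a chain of caches, each one asking the next only when it misses. This is a simplified illustration, not the actual Met Office implementation: `CacheLayer` and `fetch_from_internal_systems` are hypothetical names, and each layer is just an in-memory dictionary.

```python
from typing import Callable, Dict, List, Optional


class CacheLayer:
    """One cache in the stack; on a miss it asks the next layer, or the origin."""

    def __init__(
        self,
        name: str,
        next_layer: Optional["CacheLayer"] = None,
        origin: Optional[Callable[[str], str]] = None,
    ) -> None:
        if next_layer is None and origin is None:
            raise ValueError("a layer needs either a next layer or an origin")
        self.name = name
        self.next_layer = next_layer
        self.origin = origin
        self.store: Dict[str, str] = {}

    def get(self, key: str, trace: List[str]) -> str:
        if key in self.store:
            trace.append(f"{self.name}: hit")
            return self.store[key]
        trace.append(f"{self.name}: miss")
        if self.next_layer is not None:
            value = self.next_layer.get(key, trace)
        else:
            value = self.origin(key)  # the last layer falls back to the source
        self.store[key] = value       # cache the response on the way back
        return value


def fetch_from_internal_systems(location: str) -> str:
    # Hypothetical stand-in for aggregating data from internal systems.
    return f"Forecast for {location}"


backend = CacheLayer("backend", origin=fetch_from_internal_systems)
cdn = CacheLayer("cdn", next_layer=backend)
device = CacheLayer("device", next_layer=cdn)

first_trace: List[str] = []
device.get("London", first_trace)   # misses at every layer

second_trace: List[str] = []
device.get("London", second_trace)  # answered on the device
```

On the first request every layer misses and the data comes from the origin. On the second, the device answers straight away and nothing further down the stack is touched.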

Three caching challenges to consider

As stated, caching aims to make access to data less expensive. However, this must be traded off against other considerations.

1. Balancing space and time

Caches take up space, whether in memory or on disk, so we have to assess whether the time we are saving is worth the amount of space used.
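One common way to manage this trade-off is to cap the size of the cache and evict the entries that were used least recently. As a small sketch, Python's standard library provides `functools.lru_cache`, which does exactly that. The `forecast` function and the tiny `maxsize=2` are only for illustration.

```python
from functools import lru_cache
from typing import List

calls: List[str] = []


@lru_cache(maxsize=2)  # keep at most two results; evict the least recently used
def forecast(location: str) -> str:
    calls.append(location)  # record each time the "expensive" work really runs
    return f"Forecast for {location}"


forecast("London")  # miss
forecast("Exeter")  # miss
forecast("London")  # hit
forecast("Leeds")   # miss: the cache is full, so Exeter is evicted
forecast("Exeter")  # miss again, because it was evicted

info = forecast.cache_info()
```

Here `info` reports one hit and four misses. A bigger `maxsize` would have turned that last miss into a hit, at the cost of holding more data.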

2. Keeping caches up to date

Cached data might not be the most up to date, particularly for volatile real-time data. The more volatile the data, the shorter the time it should spend in a cache, and highly volatile data is often best not cached at all.
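For data that changes less often, one common way to limit how stale a cached copy can get is to give every entry an expiry time, often called a time to live (TTL). Below is a minimal sketch: `TTLCache` is a made-up class, the 60-second TTL is arbitrary, and the clock is passed in so the example can step through time without actually waiting.

```python
import time
from typing import Callable, Dict, List, Tuple


class TTLCache:
    """Cache whose entries are only considered valid for `ttl_seconds`."""

    def __init__(
        self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic
    ) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self.store: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str, fetch: Callable[[str], str]) -> str:
        now = self.clock()
        entry = self.store.get(key)
        if entry is not None:
            expires_at, value = entry
            if now < expires_at:  # still fresh: serve the cached copy
                return value
        value = fetch(key)        # missing or expired: fetch a new copy
        self.store[key] = (now + self.ttl, value)
        return value


now = [0.0]  # fake clock so the example does not have to sleep
cache = TTLCache(ttl_seconds=60, clock=lambda: now[0])
fetches: List[str] = []


def fetch(location: str) -> str:
    # Hypothetical stand-in for a request to the backend.
    fetches.append(location)
    return f"Forecast #{len(fetches)} for {location}"


first = cache.get("London", fetch)   # fetched at t=0
now[0] = 30.0
second = cache.get("London", fetch)  # t=30: still fresh, served from cache
now[0] = 61.0
third = cache.get("London", fetch)   # t=61: expired, fetched again
```

Choosing the TTL is the real decision: a shorter value means fresher data but more trips to the backend.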

3. Personalisation and caching aren't always compatible

To get the most out of a cache, we have to cache the more generic responses that many users share, rather than personalised ones. If you try to cache one million users’ personalised responses, each entry is only ever useful to one person, so it is pretty similar to them just requesting the information from the backend directly anyway - there is no tangible benefit there.
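A rough way to see this is to count how often a request could have been answered from the cache under different cache keys. The sketch below uses invented traffic (1,000 different users all asking about London) and a hypothetical `hit_rate` helper, and assumes a cache that never evicts anything.

```python
from typing import Hashable, Iterable, List, Set, Tuple


def hit_rate(keys: Iterable[Hashable]) -> float:
    """Fraction of requests whose cache key has already been seen."""
    seen: Set[Hashable] = set()
    hits = 0
    total = 0
    for key in keys:
        total += 1
        if key in seen:
            hits += 1
        else:
            seen.add(key)
    return hits / total if total else 0.0


# Invented traffic: 1,000 different users all asking for the same location.
requests: List[Tuple[str, str]] = [(f"user-{i}", "London") for i in range(1000)]

# Keyed by location only: everyone after the first request gets a cache hit.
generic = hit_rate(location for _, location in requests)

# Keyed by (user, location): every key is unique, so nothing is ever reused.
personalised = hit_rate(requests)
```

With the generic key, 999 of the 1,000 requests are hits. With the personalised key, none are.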

Key takeaway

Two key questions to always ask yourself are: ‘what data is going to change often?’ and ‘how critical is this piece of data?’ Users expect to see the most up-to-date pieces of information - and we believe they deserve nothing less.

When properly leveraged, caching is incredibly powerful, and it is necessary to ensure you are delivering the best experience for your customers, as well as managing the costs of your app.

I hope this quick post has been a helpful overview of the power of caching. For those of you who are interested and want to know how caching works in more depth, stay tuned for a deeper dive coming soon.